Optimization Problem Types

- Why Convexity Matters

- Convex Optimization Problems

- Convex Functions

- Solving Convex Optimization Problems

- Other Problem Types

Why Convexity Matters

"...in fact, the great watershed in optimization isn't between linearity and nonlinearity, but convexity and nonconvexity."

- R. Tyrrell Rockafellar, in SIAM Review, 1993

Convex optimization problems are far more general than linear programming problems, but they share the desirable properties of LP problems: They can be solved quickly and reliably up to very large size -- hundreds of thousands of variables and constraints. The issue has been that, unless your objective and constraints were linear, it was difficult to determine whether or not they were convex. But Frontline System's Premium Solver Platform products includes an automated test for convexity of your problem functions.

Convex Optimization Problems

A convex optimization problem is a problem where all of the constraints are convex functions, and the objective is a convex function if minimizing, or a concave function if maximizing. Linear functions are convex, so linear programming problems are convex problems. Conic optimization problems -- the natural extension of linear programming problems -- are also convex problems. In a convex optimization problem, the feasible region -- the intersection of convex constraint functions -- is a convex region, as pictured below.

With a convex objective and a convex feasible region, there can be only one optimal solution, which is globally optimal. Several methods -- notably Interior Point methods -- will either find the globally optimal solution, or prove that there is no feasible solution to the problem. Convex problems can be solved efficiently up to very large size.

A non-convex optimization problem is any problem where the objective or any of the constraints are non-convex, as pictured below.

Such a problem may have multiple feasible regions and multiple locally optimal points within each region. It can take time exponential in the number of variables and constraints to determine that a non-convex problem is infeasible, that the objective function is unbounded, or that an optimal solution is the "global optimum" across all feasible regions.

Convex Functions

Geometrically, a function is convex if a line segment drawn from any point (x, f(x)) to another point (y, f(y)) -- called the chord from x to y -- lies on or above the graph of f, as in the picture below:

Algebraically, f s convex if, for any x and y, and any t between 0 and 1, f( tx + (1-t)y ) <= t f(x) + (1-t) f(y). A function is concave if -f is convex -- i.e. if the chord from x to y lies on or below the graph of f. It is easy to see that every linear function -- whose graph is a straight line -- is both convex and concave.

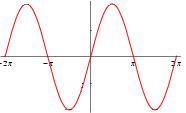

A non-convex function "curves up and down" -- it is neither convex nor concave. A familiar example is the sine function:

but note that this function is convex from -pi to 0, and concave from 0 to +pi. If the bounds on the variables restrict the domain of the objective and constraints to a region where the functions are convex, then the overall problem is convex.

Solving Convex Optimization Problems

Because of their desirable properties, convex optimization problems can be solved with a variety of methods. But Interior Point or Barrier methods are especially appropriate for convex problems, because they treat linear, quadratic, conic, and smooth nonlinear functions in essentially the same way -- they create and use a smooth convex nonlinear barrier function for the constraints, even for LP problems.

These methods make it practical to solve convex problems up to very large size, and they are especially effective on second order (quadratic and SOCP) problems, where the Hessians of the problem functions are constant. Both theoretical results and practical experience show that Interior Point methods require a relatively small number of iterations (typically less than 50) to reach an optimal solution, independent of the number of variables and constraints (though the computational effort per iteration rises with the number of variables and constraints). Interior Point methods have also benefited, more than other methods, from hardware advances -- instruction caching, pipelining, and other changes in processor architecture.

Frontline Systems Solver Technology for Convex Problems

All Frontline Systems Solvers are effective on convex problems with the appropriate types of problem functions (linear, quadratic, conic, or nonlinear). See Solver Technology for an overview of the available methods and Solver products.